For the past 20 years, our team has been building an ecosystem around technologies we believe are bringing superpowers to the people: augmented reality, virtual reality and wearable tech. We believe these technologies are making us better at everything we do and will overtake personal computing as the next platform. To help foster these superpowers, we are launching Super Ventures, the first incubator and fund dedicated to augmented reality.

“Establishing an investment fund and incubator furthers our influence in the AR space by allowing us to invest in passionate entrepreneurs and incubate technologies that will become the foundation for the next wave of computing,” said Super Ventures Founder and GM, Ori Inbar.

Today we are announcing our inaugural fund of $10 million along with initial investments in several AR companies including Waygo, a visual translation app specializing in Asian languages, and Fringefy, a visual search engine that helps people discover local content and services. Our fund will also invest in technologies enabling AR, and we are separately backing an unannounced company developing an intelligence system to crowdsource visual data.

“I am extremely excited to be working with Super Ventures,” said Ryan Rogowski, CEO of Waygo. “The Super Ventures team has an impressive amount of operational experience and deep connections in the AR community that are sure to help Waygo reach its next milestones!”

“We feel each and every Super Ventures team member provides substantial advantages to Fringefy from technical aspects, to the user experience, and business strategy,” said Assif Ziv, Co-founder of Fringefy. “We are excited to tap into the Super Ventures team’s immense network and experience.”

“Super Ventures is building an ecosystem of companies enabling the technology that will change how computers — and humans — see and interact with the world,” said the CEO & co-founder of the unannounced company. “We are truly excited to be part of this brain trust, and to leverage the network and expertise of the Super Ventures team.”

The Super Ventures partnership is made up of Ori Inbar, Matt Miesnieks, Tom Emrich and Professor Mark Billinghurst.

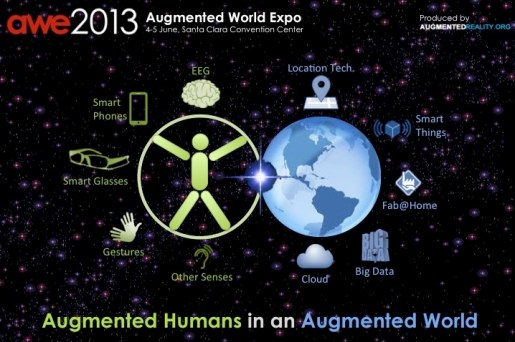

Ori Inbar has devoted the past 9 years to fostering the AR ecosystem. Prior to Super Ventures, he was the co-founder and CEO of AR mobile gaming startup Ogmento (now Flyby Media, acquired by Apple). He is also the founder of AugmentedReality.org, a non-profit organization on a mission to inspire 1 billion active users of augmented reality by 2020, and the producer of AWE, the world’s largest AR, VR and wearable tech event, held in Silicon Valley and Asia. Ori advises dozens of startups, corporations and funds on the AR industry.

Matt Miesnieks most recently led an AR R&D team at Samsung and previously was co-founder & CEO of Dekko, the company that invented 3D AR holograms on iOS. Prior to this, Matt led worldwide customer development for mobile AR browser Layar (acquired by Blippar), and held executive leadership roles at Openwave and other mobile companies.

Tom Emrich is the founder of We Are Wearables, the largest wearable tech community of its kind, which has become the launch pad for a number of startups. He is also the co-producer of AWE. Tom is widely regarded as one of the top influencers in wearable tech, known for his analysis and blogging on the space. He has used this insight to advise VCs, startups and corporations on opportunities within the wearable tech market.

Professor Mark Billinghurst is one of the most recognized researchers in AR. His team developed the first mobile AR advertising experience, the first collaborative AR game on mobile devices, and early visual authoring tools for AR, among many other innovations. He founded the HIT Lab NZ, a leading AR research laboratory, and is the co-founder of ARToolworks (acquired by DAQRI), which produced ARToolKit, the most popular open source AR tracking library. He has also conducted innovative interface research for Nokia, the MIT Media Lab, Google, HP, Samsung, British Telecom and other companies.

“The next 12–18 months will be seen as the best opportunity to invest in augmented reality,” said Matt Miesnieks. “Our goal is to identify early-stage startups developing enabling platforms and provide them with necessary capital and mentorship. Our guiding light for our investment decisions is our proprietary industry roadmap we’ve developed from our combined domain expertise. The companies we select will be the early winning platforms in the next mega-wave of mobile disruption.”

“Our team will take a hands-on approach with the startups, leveraging our industry experience, research knowledge and networks to help them meet their goals while part of the incubator,” added Professor Mark Billinghurst.

“Unique to Super Ventures is the community we have fostered which we will put to work to help support our startups,” said Tom Emrich. “Our community of over 150,000 professionals not only gives us access to investment opportunities early on, it also offers a vast network of mentors, corporate partners and investors which our startups can rely on to succeed.”

Join us in bringing superpowers to the people.
