In my pursuit of the ultimate augmented reality game, Jonas Hielscher popped up on my radar. Jonas recently completed a residency at the Better Than Reality project for artists at the V2_Lab. I couldn’t resist asking him about his experience. Here are the results of the discussion.

games alfresco: Hey Jonas, thanks for taking a break from your art and joining us for a quick conversation; how about a quick introduction?

Jonas: Hi Ori, thanks for your interest in my work!

About me: I am a German artist, working as an assistant professor at the Academy of Media Arts Cologne in the field of 3D and interaction. In my artistic work I develop experimental games and media installations that explore the boundaries between the real and the virtual world (portfolio).

This year, my specific focus lies in exploring the possibilities of augmented reality. At the beginning of this year I gave an augmented reality game workshop at Mediamatic in Amsterdam, together with Julian Oliver. There we helped the participants develop small game ideas with ARToolKit, a software library for building augmented reality applications using physical tracking markers.

Mediamatic Workshop

Back in Cologne, my students at the Art Academy were so enthusiastic about the results that they also developed a small ARToolKit project for the annual school exhibition.

Cologne Art Academy School project

And now I have just finished six weeks of intensive work with the augmented reality system developed at V2_Lab in Rotterdam.

V2_Lab Residency: Last Pawn

games alfresco: Tell us a little bit about the Better Than Reality project, how did it start? What was its objective?

Jonas: Two years ago V2_Lab started developing the first version of their augmented reality system in collaboration with the artist Marnix de Nijs (NL). That resulted in the first public user test of a project called Exercise in Immersion 4 during the Dutch Electronic Art Festival 2007 (DEAF07).

After this presentation they went on refining the system and invited three artists-in-residence. They were chosen to explore various subtopics: Marnix de Nijs (NL) will continue his Exercise in Immersion 4 project, Boris Debackere (BE) researches spatial sound, and my focus was on 3D and visual aspects of augmented reality. In the fall of 2008, a series of workshops will be given, open to (art) students, artists and other professionals who are interested in the artistic use of augmented reality.

The system: V2_Lab has developed a software/hardware platform called VGE (V2_ Game Engine), based on Ogre3D with Scheme, OpenAL, Blender, ultrasound positioning and SIOS (Sensor Input/Output System developed at V2_Lab). The user wears a head-mounted display that shows a mix of 3D visuals and real-world video taken by a head-mounted camera.

Within this framework, I developed two small experimental projects during my residency. Inspired by the novel ‘Through the Looking-Glass’ by Lewis Carroll, I wanted to create a dreamlike mixed reality environment, where real and virtual merge in an absurd experience. An important aspect for me was the connection between the virtual and the real elements.

In the first project, Last Pawn, a real table with a chess pawn stands in the middle of the room. Around the table, three virtual windows hang in the air, and a virtual avatar stands in the middle of the room. When the participant moves the pawn on the table, the avatar walks to a position in space. If the avatar ends up in front of a window, he opens it and a new virtual space appears behind it. In this way, different spaces and atmospheres can be experienced. Additional real theater light is controlled by the system to support the experience.

Last Pawn 1

Last Pawn 2

Last Pawn 3

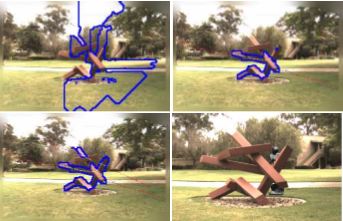

The second project, Human Sandbox, was developed as a side experiment. Here, one participant equipped with the AR system stands in the middle of the room, while another participant places several physical objects on the table. They track and manipulate the virtual objects seen by the other participant. With this system we were able to test and experiment with different objects much more easily. An interesting result of this approach was that game logic could be developed without having to program it. You could play ‘Hide and Seek’ just by placing and moving objects on the table.

Residency Sandbox

games alfresco: What was your first encounter with augmented reality?

Jonas: During the Ars Electronica Festival 2004, I saw several augmented reality projects. One very interesting piece was Augmented Fish Reality by Ken Rinaldo. Instead of augmenting the reality of a human, Ken augments the reality of a fish. Here, five Siamese fighting fish live in separate rolling robotic fish-bowls controlled by the fish themselves. The fish can steer the fish-bowl and come closer to other fish. They can see and communicate with each other, but always have to stay in their own fish-bowl. It is fascinating to watch and observe the fish’s behavior. You could say they were augmenting their own reality.

games alfresco: In the future, AR is poised to have a major role in the way we interact with the world; what does it mean for the future of art?

Jonas: In our high-tech Western world with ubiquitous computing and the so-called “Internet of Things”, where RFID and other embedded applications are part of our daily environment, we can question whether we already live in augmented realities. The augmentation of reality has become invisible through all kinds of services, such as those for mobile phones or our biometric passports.

I think one goal of artists working with these technologies should be to make things visible again, so that their effects can be reflected on and discussed.

games alfresco: AR seems to get more and more attention in the art world; what do you think has captured the imagination of artists?

Jonas: I think one major fascination is being able to directly manipulate the experience of the real world to comment, reflect or just create new mixed reality experiences. It is also the fascination with the ability to give more information about people, objects and places that would normally be concealed by their static structure.

games alfresco: More and more AR demos are surfacing on YouTube and elsewhere; what’s your favorite augmented reality demo?

Jonas: Definitely Julian Oliver’s game Levelhead. It has the beauty of a simple but ingenious idea. In the game the player holds a solid cube in front of a camera, and on-screen it appears that each face of the cube contains a little room. The objective of the game is to navigate a character from room to room by tilting the cube. It is the little magic of holding this small universe in your hands that really fascinates me.

games alfresco: What would be your dream augmented reality device (way to interact with AR)?

Jonas: During my residency I noticed that I am not a fan of head-mounted displays. You always experience the limits of the technology (small field of view, low frame rate, heavy equipment, etc.). With an HMD you feel somehow amputated by giving up the perfect view of your own eyes and diving completely into the augmented reality. Personally I believe more in the idea of having a window (like a small hand-held) to the augmented world. Then you are free to choose and compare between the real and augmented world.

games alfresco: Totally agree. As you are winding down the residency at Better Than Reality, what are you envisioning as your next project?

Jonas: At the moment I have lots of new ideas and inspirations. I have some new ideas for the game CollecTic, which I developed in 2006. I really want to develop a new and different version of it for the iPhone, which I think is a really great platform for developing mobile mixed reality projects. The initial idea of CollecTic is that you go hunting for Wi-Fi hotspots in your neighborhood and play a kind of puzzle game. What I really like about the game is that you discover the hidden infrastructure of the wireless network coverage through playing. The game also generates audio and visual feedback from the hotspots. So hopefully I’ll find some time to give the game another boost for the iPhone with some new gameplay ideas.

Furthermore, I have some new ideas about other augmented reality projects, but they are still on the cooking pan :-)

games alfresco: Wow. Thank you, Jonas. This has been an eye-popping-mind-blowing interview. Can’t wait to see your video documentation of the Better Than Reality project.

***update***

Jonas was able to upload clips of his work: take a look
